Nathan Dumlao on Unsplash, Illustration: Katie Taylor; Davidcohen on Unsplash

Conspiracy theories are an all-too prevalent part of modern society, but why exactly are these unfounded theories so common?

If you want to spend an interesting but psychologically gruelling couple of hours, visit Reddit’s r/conspiracy subreddit, which acts as a kind of drift net for the internet’s collective paranoia. Whatever fears and prejudices swim in the murky depths of the human subconscious, they get stuck here, flopping, wriggling, and gasping for oxygen.

“I think the DNC killed the wrestler Kamala,” one post says. (Quick fact check: 6’7” WWE wrestler Kamala, a.k.a. James Harris, actually died of cardiac arrest in 2020 as a result of COVID-19.) “Dominion voting machines connected to Clinton Global Initiative!” announces another, before adding, “Can’t wait for ‘fact checkers’ to say this is somehow misleading.” (Quick fact check: this is true. In fact it’s so uncontroversially true that the Clinton Global Initiative published the news itself… on its own website). Some posts hint at r/conspiracy’s more wholesome, ‘no-way-that’s-a-flock-of-geese’ origins, which seem almost endearing now against the partisan hellscape that is 2020: “One of the largest conspiracies right now is all the Reddit shills begging for this sub to go back to talking about Bigfoot.”

This is one of the fundamental paradoxes faced by would-be conspiracy theorists: the more people who believe the ‘truth’, the less true it becomes. It is the fate of all widespread conspiracy theories to themselves become the subject of conspiracy theories, like bands who become so cool that their mainstream popularity and universal coolness somehow make them inherently un-cool (see: U2, Coldplay, etc.).

But the Bigfoot guy has a point: conspiracy theories don’t feel kooky and harmless anymore.

“Conspiracies feel more real now because of consequences,” says Micah Goldwater, a Senior Lecturer in Psychology from The University of Sydney. “In the old days, if you thought we faked the moon landing, nothing really happened. The world didn’t operate any differently. But now we have the pandemic, and our response depends on cooperation and collective action, agreed upon by everyone. It’s how we stay safe. So when people reject these behaviours because of misinformation, it’s a much bigger deal.”

Micah Goldwater (Photo by Alyx Gorman)

Goldwater is just one of dozens of psychological researchers around the world trying to answer the sort of thorny, existential questions that used to be the domain of toga-wearing moral philosophers. What is truth? How do we, as humans, measure reality? Why do people believe the things they do, and — equally important — how can we get them to stop? It’s a broad field that Goldwater calls “conspiracy science”.

Reddit’s r/conspiracy subreddit doesn’t tell you much about reality, which I probably should have expected before wading into the swamp, but ironically it does explain a lot about truth. We like to imagine that ‘reality’ and ‘truth’ are the same thing, but if the last few years have taught us anything, it’s that the simple question “what is true?” is not simple at all, and may in fact be gnarlier and more sinister than anyone previously imagined. When someone like ex-presidential counsellor Kellyanne Conway deploys a phrase like “alternative facts”, she might (unwittingly) be putting her manicured finger on the whole relativity shit-show facing modern political discourse: maybe your truth is different from mine.

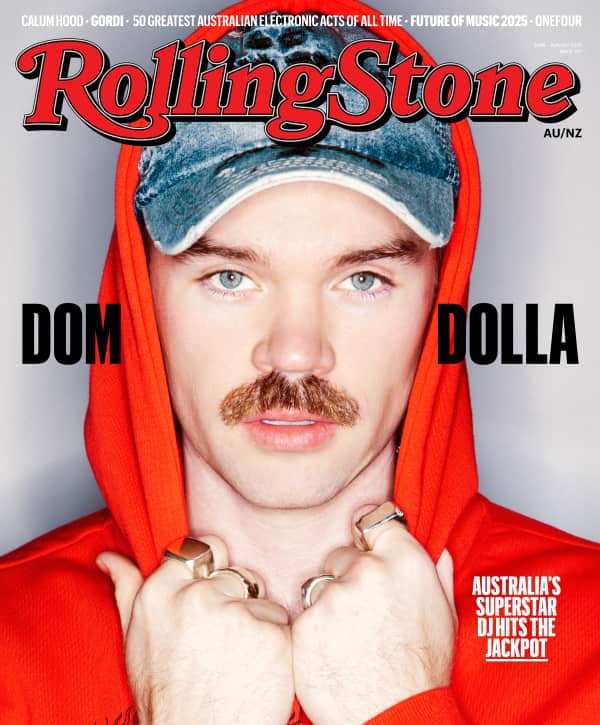

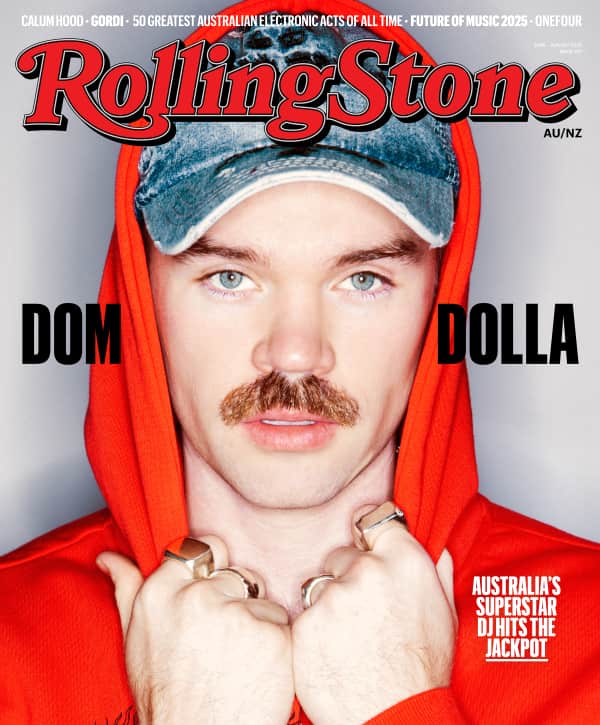


Edward Kelly is an ordinary out-of-work DJ from Queensland who runs the Australian-based Facebook group ‘The Subconscious Truth Network’ (STN) and believes that COVID-19 was probably made by the Chinese government in a lab. His story is pretty typical. “I’ve always been one to question things,” he says, “why things aren’t right, you know? I was just a normal dude on Facebook, and then I saw a video going around of people smashing their TVs. That attracted a lot of people like myself with hopes of freedom, you know, ‘We are the 99%. We deserve freedom and honesty,’ that sort of thing.”


What Kelly had actually seen was the handiwork of former reality TV star and self-styled Australian ‘truther’ Fanos Panayides, whose Facebook group ‘99% Unite Worldwide’ gained thousands of members in the early days of the pandemic and encouraged followers to smash their TVs in protest against media organisations that were, quote, “telling us what to think”.

Panayides and ‘99% Unite’ represent a complex grab bag of popular conspiracy theories. They were regular attendees at Melbourne’s anti-lockdown protests in May 2020, where homemade A3 posters spread the enlightened truth about everything from #QAnon (possibly the world’s hottest conspiracy theory, which holds that a cabal of Satan-worshipping paedophiles is plotting to overthrow Donald Trump) to #RitualChildSacrifice (see: #QAnon) to #TeslaFreeEnergy (the belief that unlimited, pollution-free energy tech is being suppressed by authorities) to #LuciferTelescope (a giant telescope in Arizona, supposedly owned by the Vatican, which allegedly seeks out aliens in order to forge some sort of intergalactic Catholic alliance).

Kelly started moderating for ‘99% Unite’, but quickly became suspicious about the group’s motives and some of its less evidence-based theories. “When you’re an admin you find that people aren’t who they say they are,” he says. “These guys had their masters in linguistic programming and mind control and hypnotism. They were selling fear and stopping people thinking properly. So I got involved and started my own page.”

Considering he runs a Facebook group in which members debate (among other things) the lessons we can learn from the lost city of Atlantis, Edward Kelly comes across as surprisingly thoughtful and conscientious. You could call him a conspiracy moderate. “I’m not a close-minded person,” he says. “Most people aren’t like that. You’ve got a bunch of people calling mask-wearers sheep, but then blindly following some truther. I’m keeping my possibilities open.”

Schisms like Kelly’s seem to happen all the time. If there is a pattern behind conspiracies, it’s a tendency for conspiracy groups to splinter into smaller sub-conspiracies, which in turn split off into even smaller sub-sub-conspiracies, kind of like amoeba cells or Mormon fundamentalist sects, because the only downside of attracting naturally suspicious and proudly independent people is that, eventually, they’ll become suspicious of you.

Goldwater says this happens because conspiracy theories have as much to do with social dynamics and belonging as they do with what and how we think. They tend to attract people who crave meaning, certainty, narrative, and, most of all, community. Every Them needs an Us. “We’ve always known that shared beliefs are a big part of any group membership,” he says, “and all conspiracy theories have a big social component. Some research has found that many Flat Earthers, for example, are actually just there to make friends.”

It’s this social component that makes rational debunking almost pointless—challenging what people think is hard enough, but challenging how they feel is, as Goldwater puts it, “closer to a full-blown intervention.”

Intelligence also doesn’t correlate in the way you might think. It would be comforting to imagine that only stupid people believed stupid things, but research suggests that intelligence not only fails to safeguard against conspiracy theories but might also make people more vulnerable to misinformation, because intelligent people are better at creatively rationalising their own beliefs.

A 2019 study from Duke University, for example, found that among conservative white Republicans, the so-called Barack Obama ‘Birther’ conspiracy was actually believed most strongly by participants with the greatest political knowledge. Even worse, the more educated a participant, the less likely they were to update their beliefs in the face of counter-evidence, which kind of flies in the face of the famous mic-drop line often attributed to Charles Bukowski: “The problem with the world is that the intelligent people are full of doubts, while the stupid ones are full of confidence.”

The biggest problem debunkers face is that once misinformation sticks in the human brain, it’s very, very hard to dislodge. Ideas can metastasize to your frontal lobe. It’s no coincidence that conspiracy scientists and cognitive psychs borrow the language of epidemiology when talking about this stuff, because conspiracy theories and viruses attack the human system in very similar ways (an irony that wasn’t lost on anyone during COVID-19 anti-mask protests): they both spread virulently, they both mutate and evolve when attacked, and both can respond well to ‘inoculation’.


It works like this. According to The Debunking Handbook, a 2020 paper co-authored by 22 cognitive scientists from all over the world, by exposing people to weakened forms of misinformation (and refuting them properly), you can kind of breed ‘cognitive antibodies’. “For example, by explaining to people how the tobacco industry rolled out ‘fake experts’ in the Sixties to create a chimerical scientific ‘debate’ about the harms from smoking, people become more resistant to subsequent persuasion attempts using the same misleading argumentation in the context of climate change.”

Three researchers, John Cook, Ullrich Ecker, and Stephan Lewandowsky, actually tried this experiment for real. Before debunking several climate conspiracies head-on, they showed participants a report on the tobacco industry’s use of fake experts and misinformation to cast doubt on the link between smoking and lung cancer. And it actually worked. By first reading about the tobacco industry’s shady lobbying tactics, participants, even the hard-core dyed-in-the-wool Tories, became more suspicious about climate denialism.

This is a potentially game-changing idea and might be the difference between a future of reasoned human debate and some schizoid world where facts are more or less optional and everyone lives inside subjective little reality bubbles. If we can teach people to think more critically beforehand, to question assumptions, check their sources, do independent research, fine-tune their BS detectors and objectively measure things like credibility, we’ll go a long way towards beating conspiracy theories before they even get started.

Photo by Jonathan Borba on Unsplash

Of course, the ironic catch (and you might notice a pattern of dark irony here that would make Kafka cry) is that conspiracy theorists promote these exact same techniques in the service of misinformation. Don’t believe what you’re told. Question authority. Think for yourself. Dig a little deeper. In the same way that dictators always assume control under the guise of ‘freedom’, conspiracy theorists spread misinformation in the name of enlightenment. And they do the hard work, too. Chatting to Edward Kelly, it quickly becomes obvious that Subconscious Truth Network members probably spend way more time digging for ‘facts’, following up leads, researching ideas, hunting for primary sources, and questioning authority than I do. Their perspective is just whacked. It’s like an irrational house built from rational components.

“This is why low health literacy doesn’t always correlate to health-related conspiracies,” says Goldwater. “Generally with pseudo-sciences, people have a bunch of elements that are actually true, they just put them together wrong. Like anti-vaxxers who say that Big Pharma is this huge corrupting force—they wouldn’t say they’re anti-science, they just believe certain forces are corrupting the science. And there are a lot of ways in which that’s true…just not in the way of vaccines causing autism. That’s the wrong application of a well-deserved scepticism.”

Of course, once an idea takes hold, the whole thing becomes way messier. If you’ve ever tried to argue with a hardcore anti-vaxxer, QAnon disciple, or climate change sceptic, you’ll know that debunking is actually incredibly, maddeningly, tear-your-own-hair-out difficult. “Simple corrections on their own are unlikely to fully unstick misinformation,” The Handbook says, “and tagging something as questionable is not enough in the face of repeated exposures.” Essentially: the more times we hear a lie, and the more credibility we invest in the liar, the harder it becomes to dislodge.


The Handbook recommends a four-step debunking process, which (let’s be frank) is essentially a modern-day anti-brainwashing guide. First, lead with a fact (a “clear, pithy, and sticky” fact, if you have one available). Second, warn gently about the myth or misinformation. Third, explain the central fallacy behind it. And lastly, repeat the fact, as many times as possible, and make sure it provides an alternative explanation for whatever you’re talking about. You have to sell a coherent narrative. And this isn’t always easy. For example, the traditional anti-vax claim that vaccines cause autism is, psychologically speaking, particularly tricky to debunk, because scientists don’t exactly know what causes autism. It’s hard to break down and rebuild someone’s entire world view when your most honest counterargument is, “We don’t know what’s right, but we know that you’re wrong.”

Here are some debunking techniques that don’t work. Simple retractions (e.g. “that’s just not true” or “plesiosaurs don’t live in Scottish lochs”) make people defensive and unreceptive to new information. Offering facts that are more complicated than the initial misinformation is also ineffective (The Handbook recommends “pithy” facts over “technical language”). There’s even a risk, though more recent research suggests it is smaller than once feared, that you’ll make things worse, which is known as the ‘Backfire Effect’: essentially, by repeating the myth over and over while debunking it, you reinforce the misinformation and drive the theorist even further away from reality, which is kind of like lecturing someone on the evils of processed sugar while wafting a chocolate cake under their nose.

This sort of research was once reserved for fighting fringe beliefs and deprogramming cult victims, not debunking mainstream political theories. It’s tempting to think that we’re more credulous now, or maybe even less intelligent, but Goldwater says human beings have been battling these particular mental gremlins for centuries. We suffer from the same cognitive biases we always did; they’ve just become easier to exploit. “There have always been snake-oil salesmen,” he says. “People think we’re more gullible now, but there’s really no reason to think that. It’s just that, because of the consequences, conspiracy theories have become extremely salient. People are now going out and killing people on behalf of ideas.”

To be fair, a few users on r/conspiracy seem to be waking up to the dangers of running multiple realities side by side. The subreddit feels like it could be slowly devolving into a meta-conspiracy about the nature of conspiracies (and theories thereof). One post, which stands out like a solitary oboe at a Metallica concert, sums it up pretty well:

“Just remember, the real conspiracy is to keep us divided. Keep fuelling hate and division when it suits your fancy. You are stepping right into their trap.”